After planning it for a long time, I finally managed to interview the social scientist Arne Maibaum from NotMyRobots about the initiative.

I started the interview with only a few questions. However, the interview lasted almost an hour and was fascinating. We touched on several topics I expected, like HAL, the Terminator and robots that take our jobs, but also surprising topics like sexism, racism, and epistemology. Or, as Arne Maibaum said at the end of the interview: “That’s what you get when talking to a social scientist”.

Me: Hi, Arne! For all those who don’t know it yet: What is NotMyRobots anyway?

Arne Maibaum: We have a little description in our Twitter profile and on our website: Especially on Twitter we saw that an incredible number of horrible, unrealistic, but most of all really wrong visualizations are used for popular scientific robotics articles. But sometimes also in teasers to scientific articles. These are unrealistic images in articles about robots, but also – lately more and more – in articles about AI, which are often illustrated with robots.

“We”, that is Philipp, Lisa, Laura and me. Philipp and I work in a robotics group at the TU Berlin. Laura and Lisa work in Munich on the topic of robotics from a social and cultural-scientific perspective. Philipp and I are both sociologists who ended up in human-robot interaction. All of us always collected these kinds of images. In other words, all of us have had a kind of gallery of horror anyway. Lisa also dedicates her work to robots in the pop culture of American history. That means she was able to use this in her dissertation.

Starting from this, we thought that we had to make this public in some way. This is why we created the Twitter account @NotMyRobots, which tries to point out when these wrong icon pictures are used. If somebody shows us such pictures by using the hashtag #notmyrobot, and quite a few people do that now, we re-tweet and collect them. Depending on the picture we also try to point out with our own critical comments why we don’t like it.

How did these people find you? So how did it happen that there are so many helping out now?

I guess many robotics people are really bothered by that. But I also think that many people from the humanities are extremely sensitive to this. Many of them have always seen such pictures and shook their heads. This results in a certain number of people who see this problem and like our initiative. Then they use our hashtag or tag us.

That’s an interesting observation. I’ve been noticing these images for years, too, at least since I started working in robotics. I also roll my eyes, but I never really reflected that this is really harmful. Why is it so bad after all?

Well, what we always assume is that technology does not start from zero and robots are not simply built the way technology recommends. But, and this is the sociological perspective, technology is always man-made and always socially constructed. As such, it is of course also subject to negotiation of how society views robotics and robots. In the communication about how such technology is constructed, visualization naturally plays a major role. With care robots, for example, we see two expectations that are strongly linked to visualizations:

First, there is always the Terminator robot, which for some reason or another keeps popping up in robotics articles. This is accompanied by the expectation that future robots will be ice-cold and potentially deadly machines, with metal grippers that want to reach out to us. This is, of course, the horror scenario, which stirs up fear and leads to people not wanting to have anything to do with it.

One of my favorite examples is from a project I worked on as a student. We had autonomous transport systems that were used in hospitals to transport laundry boxes, for example: the Casero robot. These were then to be used on an oil rig, i.e. for men drilling explosive material over many kilometers. But they strictly refused to take such a robot to their oil rig because they were afraid it would kill them all. Of course, this image certainly comes from science fiction and this fear of being at the mercy of such a machine. And when we then see articles that put such an image under ‘robots should help us’, it is of course inherently problematic for how technology is perceived. This of course has an influence on funding etc.

My idea for the interview with you or your team arose from my impression that robotics is presented as too positive and too powerful. If I hear this correctly from you, then you see more often that robotics is portrayed as too violent or too brutal?

No, that was just one aspect: the Terminator visualizing horror, so to speak.

The second aspect is of course the much too positive and much too advanced robot image. In this case, the main concern is always that robots are represented as humanoid service robots. This goes so far that articles on Artificial Intelligence (AI) are also illustrated in this way. If, for example, AI is supposed to help in the fight against the new coronavirus, then the illustrations show a humanoid robot that has filigree hands and even uses human technology.

Right, sitting at the computer for example…

The pure insanity! So, on top of the science fiction horror expectation, there is this humanoid performance expectation, namely that we will soon have robots like the ones you see in the pictures: slim, very human-like robots, where humanity is only concealed by white plastic covers on human body parts. That is creating a completely false expectation as to the state of robotics and what exactly the research direction is.

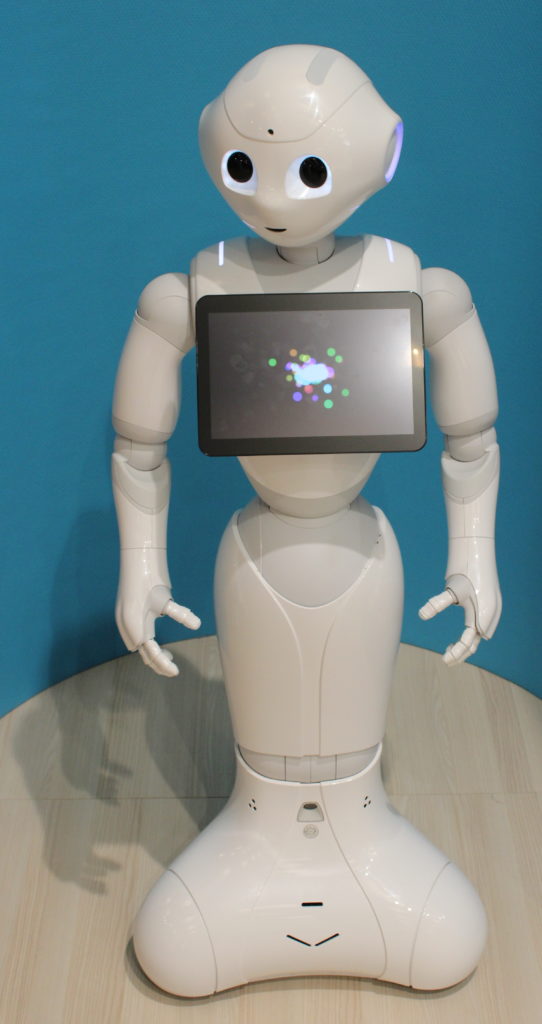

It’s also about the human resemblance, which of course also raises expectations. You don’t even have to take the agency pictures of these white, smooth humanoids for that, you just have to look at the robot Pepper. Pepper is the absolute synonym for what is expected of humanoid robots and what they are actually unable to achieve.

You have now mentioned AI several times. Is the NotMyRobots problem increasing in your perception due to the current AI trend?

Yes, absolutely. Of course, it is also difficult to illustrate AI, I have to admit. What we have been observing more and more over the last two years is that discussion turned away from robotics to AI. However, if there are articles about AI helping us to solve a certain problem, the picture is usually a robot. This is a strange thing, because it shows embodied artificial intelligence in the form of robots, which neither represents the state of the art in any way, nor does it do justice to potential development in robotics or AI in any way.

I’m coming back to how your project NotMyRobots started. You said all of you had started collecting these examples already due to your scientific background. Was there any specific reason for this?

I think, just the horror!

For some of the pictures we saw, there is just nothing left but to store them in astonishment and put them into the poison cabinet. I think the most horrible ones are those in which robots are suddenly gendered. The competent robots used to be very male robots, with male facial features. But I find it even worse when there is an unnecessary femininity. Robot depictions with breasts for example, which do not represent any technological reality and target sexist categories.

This is of course terrible, especially for sociologists, because robots and cyborgs also carry a promise of a world that could challenge gender stereotypes. Humanoids in particular are of course always very much caught between gender and gender stereotypes. The male-strong, heroic robot on the one hand, the Terminator embodied by Arnold Schwarzenegger as a clichéd image of masculinity.

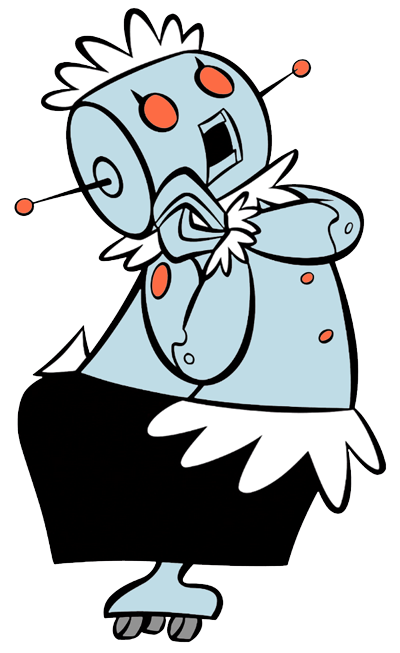

On the other hand, we find many service stereotypes. It is no coincidence that Rosie from the Jetsons can be found on many of the presentation slides in human-robot interaction. Especially in the case of household robots and care robots, the femininity that we know from care professions is projected onto robots. You find images of household robots that wear housemaid aprons and whose poses are also clearly associated with performing service activities.

On the one hand, this is a pity, because robotics is a technology that could enable post-gender identities. This is certainly not a central concern of current robotics development, but it is interesting for us. Pepper, for example, was programmed to answer whether it is a boy or a girl, at least in its German and English version. So this seems to be an immediate question that is triggered by Pepper. I find it interesting, for example, that we obviously have to add a gender to this technical object, otherwise we have no category to talk about it. Pepper obviously fails in this categorization, though.

This is of course exciting from a sociological perspective: humanoid robotics can sometimes question common categories and make us ponder. If we then take images like the robotic cleaning lady or the robotic housekeeper or the sex object robot in absolutely non-sexual contexts, then that is something to think about. This has implications for the handling of technology in the long run. If we perceive robots as subordinated technology, this often implies a very strange way of dealing with technology. Especially when considering that all robotic technology is dependent on entering into a rather cooperative relationship with us. At the moment, for example, we can see that the actual adaptation is still happening with the humans: if robots are to function, the environment has to be structured and robotized – our interaction with them has to be robotized. So it naturally raises false expectations when we see robots in the picture that are perfect maids.

I would never have thought that I would come across sexism and post-gender in robotics so intensively. I don’t usually come across this so much in robotics as an engineering science.

I think that’s okay. I am a social scientist and I know that our engineers usually have completely different concerns in mind. But the concerns about these images do meet on a certain level. The concern about what technology can do and how the technology is presented is something I find immediately reflected in our roboticists.

Absolutely! For you and the others from NotMyRobots this has a scientific background, right? For example in the context of your dissertation?

With Lisa and Laura it is certainly in the context of their dissertation, yes. My dissertation is more about robotics as a science and especially about how robotic competitions play a role.

So this is more of a hobby for you.

In the end, of course we think about it scientifically. For example, we have already been invited to give a talk on the Open Lab we have at MTI-engAge. The idea to start such an Open Lab was of course born out of exactly such considerations: that robotics is struggling to be the discipline that either builds the Terminator or takes away people’s jobs. This is a mortgage that one takes with every robotic project.

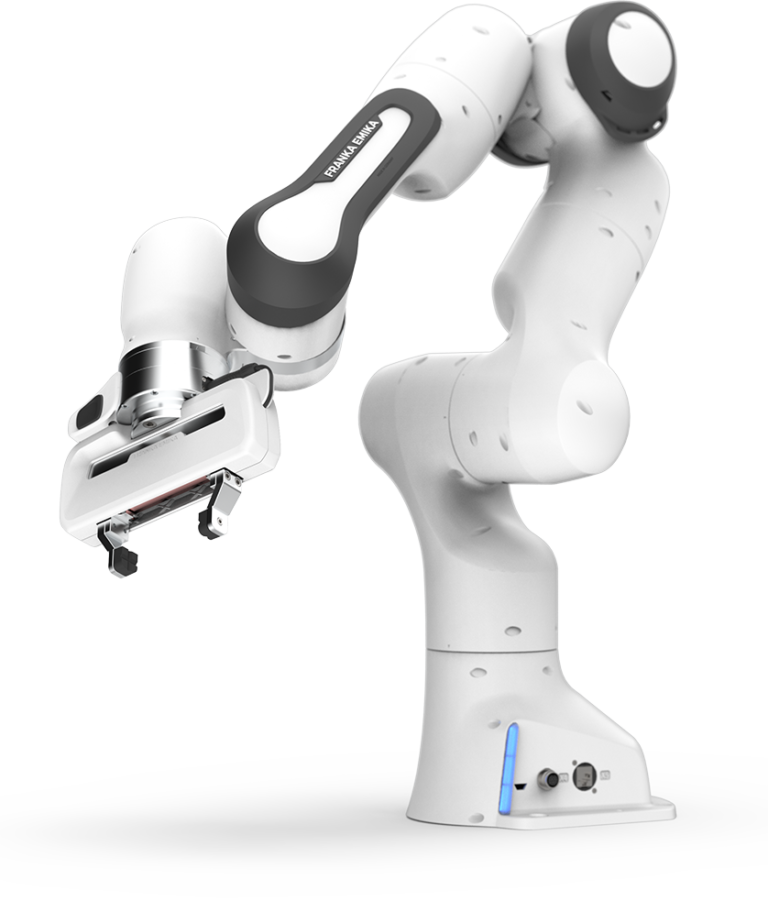

In the project, our lab was therefore open to everyone once a month. It was very well-received and people came to us with the expectation of exactly such visualizations: to see robots that can do everything. They were of course disappointed that Pepper stood in the entrance and tried to say hello, but then could do very little and didn’t shake his hips. So there is this very nice tension: on the one hand the insane expectation of what the robot can do, and on the other hand our Franka Emika handing over balls, which is insanely unspectacular on the first level.

There’s also a difference between Pepper, which nobody really likes in the lab, and Pepper, which you can just pet a little. And then we have this great machine that can do a lot and into which a lot of thought has been put. It takes a ball from the table and hands it over to the person, and that is a disappointment of these expectations.

But then there is a second level, namely to think about what is complicated in robotics and what is so exciting about such trivial tasks for current robotics. There is usually such an effect that people realize how difficult it is to build robots. If you are in a laboratory and look at the movements and what the robot can do and what is state of the art, it works incredibly well. After that, most people are actually enthusiastic again. That gives such a nice curve of robotic enthusiasm.

Of course, we could only afford this because we had a spacious laboratory, many robots and people who were interested in this dialogue with the public. And such dialogues are rather rare. Of course, there are usually trade fairs and open days as possible points of contact, but the visitors there – and we have measured this – tend to be those who are already enthusiasts.

Well, it is somehow a hobby, but it was also part of our professional reality to be confronted with exactly these expectations. For us, it is still interesting to find a way of dealing with them. In two cases we have written to editors and – we are proud of it – they have changed the symbol images in their articles.

Oh, cool!

That is naturally great. And as sociologists we are always happy to criticize, of course, but it is much more difficult to name alternatives. We still find that difficult. We had a colleague who is planning an exhibition and an art object on how to illustrate robotics differently and better. Due to Corona, this is currently on ice. But the next step, of course, would be to think about what images we can create that are more appropriate to the technology and the actual direction of the technology.

At this point I’ll just jump in. As a robotics engineer I should be able to do it better myself, shouldn’t I? This refers to this blog, of course, as well as to the Wikipedia article ‘Robotics’. Please have a quick look at it. The cover picture, the Shadow-Hand in the upper right corner of the article, I put there years ago. What do you think about it?

Well, the picture at least shows a technological state of the art. At least it’s showing actuators and muscles. But I’m not sure if the hand could actually grip the light bulb in its movement. I dare to doubt that.

So this would again be an image that does not represent the state of robotics realistically?

Actually, I don’t think the picture is that bad. You can see it being inspired by biological humans, and it looks very filigree. But grasping with a human hand is indeed something robotics actually is dealing with at the moment. And the hand looks very technological. So I don’t think the picture is that bad.

Okay, that’s a relief.

This is also a very good hand-over to my actual core question, which is the trigger for this interview with you. With NotMyRobots you put your finger in the wound of journalists and editorial offices that use these false symbol images. In the article I am currently preparing for another blog, however, the point is that I think the problem is largely homemade. That robotics science itself causes and fuels this distorted image. Do you see it that way too?

Maybe it’s because our robotics lab is relatively alert in this respect, and things have regularly even been broken off explicitly when the impression was created that the robot can do something that it actually cannot do. But we probably do this because it is not done in many other places.

In robotics itself I did not encounter this problem so much. Maybe I am blind myself, so I am very curious about your article. In the robotics work in which I was directly involved, I made sure that there is a lot of communication about what is being done. There is often a video attached to the publications, which tries to make it clear what is being done. Then I also learned that in videos there are signs by which one can recognize whether the video is manipulated or not. Classically, for example, a person in the background of the video, where you can easily see if the video is fast-forwarded or interrupted.

But where I think it clearly appears is in human-robot interaction as a discipline, and our lab owes something there, too. Many of the experiments are carried out in the Wizard-of-Oz variant. Wizard-of-Oz is fake, if you will. When I type in dialogues, when I control movements, it naturally hides the actual state of the art and is a euphemism for what you can actually do.

I saw this recently during the long night of science. There, transfers are made between robot and human, and it is claimed that the robot would be able to recognize this. They even say something about the sensors. But in reality the operator sits in the next room and controls the robot via a camera. This is of course bad scientific practice.

But this is precisely because of the wrong expectations and because the current state of robotics is often too unspectacular. The things that are interesting from an engineering point of view are often simply not very visible or spectacular from the outside. If you want to impress or get funding, you are sometimes forced to make such compromises in the presentation.

From my point of view, however, there is a certain difference between the popular scientific approach, which is quite horrible, and the semi-scientific approach, which is somewhat less horrible. But I have rarely experienced really scientific misconduct among colleagues in robotics.

I think it’s good that you see it so positively. I don’t think I’m quite so positive about it. What I would count – this may not be a scam, but it’s at least a euphemism – are many videos of robotics. I try one thing 50 times and then upload the one successful time to YouTube. Then I talk about it and maybe even write a scientific paper about it. I think we encounter this in robotics science, and it is a similar practice for me.

Yes, I find that incredibly exciting, because I am actually writing about it in my dissertation. This will probably be my project for the future: the epistemic question – here comes the sociologist again, sorry – how scientific evidence is generated in disciplines that are at the interface between science and engineering. I don’t think this is well described in science theory yet. The incredibly interesting thing about robotics for a sociologist is that you create the object of your own research during the research.

Oh, that’s right. Interesting!

This is something that is absolutely unusual in most scientific disciplines. There are of course similar examples: Scientists who build the electron microscope out of the need to do their research on atoms. There, however, the built object clearly has instrumental character.

But the robot we build is more than an instrument for further research. That’s why it’s incredibly interesting how evidence is created there. I clearly see video as a new medium and I don’t know it from any other science. You often see with robotics papers that on the homepage you can find the link to the IEEE paper that links my YouTube video. In the video I let my robot drive three times, maybe you can see the curves of the movement. This is incredibly interesting because it is a new way to create evidence and communicate science. Of course, it is exactly this effect that is interesting, that one-time functioning is often enough as a kind of proof of concept. This contradicts the principle of scientific repeatability in practically all other sciences. But of course this is also due to the fact that the setup of the experiments is often of such a high complexity that a single successful run in a verifiable form – and this is the video, for example – is sufficient to be a proof of concept.

I’m not sure yet if it is simply non-scientific or if it is scientific misconduct or if it is simply due to the state of robotics as a science.

I have to say in honor of robotics that some journals and conferences have recognized this problem. Therefore, there is a growing demand for a certain reproducibility of the experiments. For example by having to deliver the software or by having to deliver the whole ROS installation as a virtual machine. Of course this is still a problem due to the complexity you mentioned. For example, not everyone can buy the robot to reproduce the experiments. Sometimes the systems are simply so complex that it is not possible to do it with reasonable effort. But I think this problem is seen more and more and is being worked on. For example with robotics competitions, where you have to show it live, that’s your topic again.

This is actually one of my chapters, yes: the competition and the live demonstration as an experimental status. That’s where we make the connection to NotMyRobots and the public. If we have public live demonstrations of the status of robotics, it is of course extremely useful for communication. This is something that goes back to very old forms of technology demonstration that we had in the 19th century and the beginning of the 20th century. For example Lindbergh, who demonstrated what his plane could do in front of the Parisian public. Of course, not everyone understands the airplane as an incredibly complex system. The situation is similar in robotics. We have an insane number of disciplines there. Even the people who ultimately implement the behavior cannot understand the entire system.

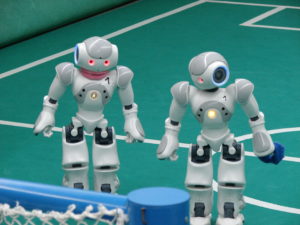

I see this kind of demonstrations in robotics for example at the RoboCup. When I go there and see the robots playing soccer, it is of course also for the purpose of seeing: these robots are not terminators. That’s probably why the Naos are much more popular than the robots of the Middle Size League. Especially the Naos show the state of the art very well and how much work it is to do something as trivial as finding a ball, finding a goal and then kicking the ball into this goal. You can experience such things at the RoboCup. So these events are well suited to create a connection between people interested in science and robotics.

For me as a roboticist these RoboCup videos are often terribly frustrating. For example, when you see the umpteenth time from RoboCup@Home, how a moving barrel approaches a table very slowly to grab a single can of cola, it frustrates me tremendously, and I think: somehow robotics has been standing still for years. But from the point of view of science communication, of course, this is just right to give a more realistic picture. These scenes show that even seemingly trivial tasks that can be performed by a human with eyes closed are incredibly complex. And here we are just now in robotics: performing simple tasks even in a structured environment is still rock-hard.

So, we’ve made some pretty big turns in this interview. Now I have two or three questions at the end that don’t fit in perfectly.

That’s how it’s supposed to be with the last questions of an interview.

This problem you show with NotMyRobots, wrong symbol images, is not unique to robotics. Just think of the classic symbol images of hackers in hoodies. This probably applies similarly to almost all disciplines. If you know your way around and work in these disciplines, you will probably throw up your hands in despair. So should there be NotMyHackers and NotMyX… besides NotMyRobots?

I think something like NotMyHacker already exists, people who ironize and depict the classic hooded sweatshirt hacker. But from my perspective, I don’t know any images that represent sociology, for example. Maybe we are simply less represented in such articles.

There is something else we could do in robotics as well. If, for example, a sociologist is interviewed, then a picture of them is shown, and not a symbolic picture as in robotics. This would perhaps not be wrong in robotics either, that the faces are shown behind the technology that is being depicted. I think that biologists find similar things in their articles.

But I think that this strange science fiction realism has the bigger problem in robotics, because robotics has always been associated with science fiction. When talking about robots and robotics, it often doesn’t take three sentences until someone mentions Asimov – Godwin’s Law of Robotics, so to speak. This means that the science fiction elements are much clearer in robotics than in other sciences. It is this oddity of fantasy and very concrete representation that makes robotics unique. I don’t think that in any other discipline this image exists so strongly. When we see humanoid robots, we have an idea of them that is pop-cultural. Humanoids are very present in our pop-cultural imagination and in our pop-cultural memory. At least in a western context I think they are much stronger than in other disciplines. If you google stock photos – and I’ll probably try this as an experiment later – robotics is probably the discipline where most horrible stock photos are found.

That may well be true.

Alright, let me conclude with a question that you have already touched on two or three times. What should I as a blogger, journalist, editor do better or consider in the future?

There should be a gallery of horror of graphic robotic visualizations that are no longer used. So for example Terminator and HAL. They are out! They are simply no longer used, unless the text is actually about the Terminator or HAL. Also, everything that is strongly gendered and – I think this is one of the most terrible things – this white robot, which also has this strange purity/whiteness. They all get fired, too! I think this is the most popular stock photo and the designer probably got pretty rich with his White Robot Guy, if the pictures are not pirated. This one will have to go, too!

When we illustrate AI, it must be clear that AI is not a thinking robot. In general, AI articles should not be illustrated with robots, unless the artificial intelligence is actually embodied in robotic systems.

I would advise using images of the robotic systems you write about. They usually have pictures. At some point we made it so that we told our press department to come by our lab if they wanted to have pictures. They then took pictures of the actual robots. They often still show things they can’t really do. But at least it is an existing robot that is actually used to do the research that is being written about.

If the teasers of the articles should look nice, there are also a lot of pictures that are abstract. Robots in the form of abstract art I find less bad than the pictures that show concrete but false visualizations.

In conclusion, I would say that an article on AI does not need a robot picture, and a robot does not always have to be a humanoid. It would already help a lot if robotics were shown as a technology. That’s why I don’t think your picture on Wikipedia is so bad. If there are no veneers, if actuators are shown, if the mechanisms are visible, this would be very helpful.

Alright, Arne. Thank you so much! We’ve been talking for almost an hour now, which I was not expecting at all. We also talked about many aspects that I did not expect: not only sexism in robotics, but even hints of racism in robotics.

That’s what you get when talking to a social scientist! These are the topics we talk about all the time and that is also somehow our homework. Of course, we also do this to bring our own scientific legitimacy into the form of our criticism.

I think especially this white robot is absolutely not harmless from this perspective.

This interview was conducted in April 2020 by Arne Nordmann and is also available in German.
